Quality of Service (QoS): Understanding The Basics

What is Quality of Service (QoS)?

Quality of Service refers to traffic prioritization and resource reservation control mechanisms. It represents the ability to give different priorities to different applications, users, or data flows, or to guarantee a certain level of performance to a data flow.

Quality of service is particularly important for the transport of traffic with special requirements. In particular, developers have introduced Voice over IP technology to allow computer networks to become as useful as telephone networks for audio conversations, as well as supporting new applications with even stricter network performance requirements.

Use cases:

For example, a required bit rate, delay, delay variation, packet loss rate, or bit error rate may be guaranteed. Quality of service is important for real-time streaming multimedia applications such as voice over IP, multiplayer online games, and IPTV, since these often require a fixed bit rate and are delay sensitive. Quality of service is especially important in networks where capacity is a limited resource, for example in cellular data communication.

QoS Approaches

There are two principal approaches to QoS in modern packet-switched IP networks: a parameterized system based on an exchange of application requirements with the network, and a prioritized system where each packet identifies a desired service level to the network.

- Integrated services (“IntServ”) implements the parameterized approach. In this model, applications use the Resource Reservation Protocol (RSVP) to request and reserve resources through a network.

- Differentiated services (“DiffServ”) implements the prioritized model. DiffServ marks packets according to the type of service they desire. In response to these markings, routers and switches use various scheduling strategies to tailor performance to expectations. Differentiated services code point (DSCP) markings use the first 6 bits in the ToS field (now renamed as the DS field) of the IP(v4) packet header.
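As a rough illustration of the prioritized model, an application on a host can request a DSCP marking for its own packets through the standard socket API. The sketch below assumes a platform that honors the `IP_TOS` socket option (Linux does, while some operating systems ignore it), and the helper names `dscp_to_tos` and `open_marked_udp_socket` are hypothetical. Whether routers along the path trust the marking is a policy decision outside the application's control.

```python
import socket

EF_DSCP = 46  # Expedited Forwarding code point


def dscp_to_tos(dscp: int) -> int:
    """Place a 6-bit DSCP value in the upper six bits of the ToS/DS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0..63")
    return dscp << 2  # the two low-order bits are left at zero


def open_marked_udp_socket(dscp: int) -> socket.socket:
    """Create a UDP socket whose outgoing IPv4 packets request the given DSCP."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))
    return sock
```

For example, `open_marked_udp_socket(EF_DSCP)` would ask the operating system to write the byte value 184 (0xB8) into the DS field of each datagram sent on that socket.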

Types of QoS:

- Ingress QoS: This refers to controlling the incoming traffic into the network. It is used to control and prioritize traffic before it enters the network, and ensure that only authorized and well-behaved traffic is allowed in.

In this case, the marking policy is applied to packets as they arrive on the interface. The policy therefore acts on inbound traffic, which is entering the router and has not yet been forwarded towards its destination.

It is used when you want to enforce a specific quality of service (QoS) policy on the incoming traffic, before it is processed and forwarded by the router.

For example, if you have a service provider network and you are providing a guaranteed level of service to your customers, you might want to enforce a specific QoS policy on the incoming traffic from each customer, to ensure that their traffic is treated according to their service agreement.

By applying a marking policy on the input traffic, you can classify the traffic into different classes, mark the packets with the appropriate QoS markings, and apply the appropriate queuing, shaping, and congestion management techniques, to provide a guaranteed level of service to each customer.

Another example is when you have a network that includes multiple routers and you want to ensure that the end-to-end QoS policy is consistent throughout the network. By applying a marking policy on the input traffic on each router, you can maintain the QoS markings and enforce the same QoS policy at every hop in the network, to ensure a consistent level of service end-to-end.

- Egress QoS: This refers to controlling the outgoing traffic from the network. It is used to ensure that traffic leaving the network is given the appropriate priority and bandwidth, and to prevent congestion from occurring outside the network.

It is used when you want to enforce a specific quality of service (QoS) policy on the outgoing traffic, after it has been processed and forwarded by the router.

For example, if you have a router that is connected to multiple networks and you want to provide a different level of service to each network, you might want to apply a different marking policy on the output traffic for each network. By applying a marking policy on the output traffic, you can classify the traffic into different classes, mark the packets with the appropriate QoS markings, and apply the appropriate queuing, shaping, and congestion management techniques, to provide a different level of service to each network.

Another example is when you have a network that provides different services to its customers and you want to ensure that the traffic for each service is treated differently. By applying a marking policy on the output traffic, you can classify the traffic into different classes, mark the packets with the appropriate QoS markings, and apply the appropriate queuing, shaping, and congestion management techniques, to provide a different level of service to each service.

In general, the use case for applying a marking policy on the output traffic is when you want to enforce a specific QoS policy on the outgoing traffic, to ensure that it is treated appropriately and given the right priority in the network.

QoS Mechanisms and Toolset

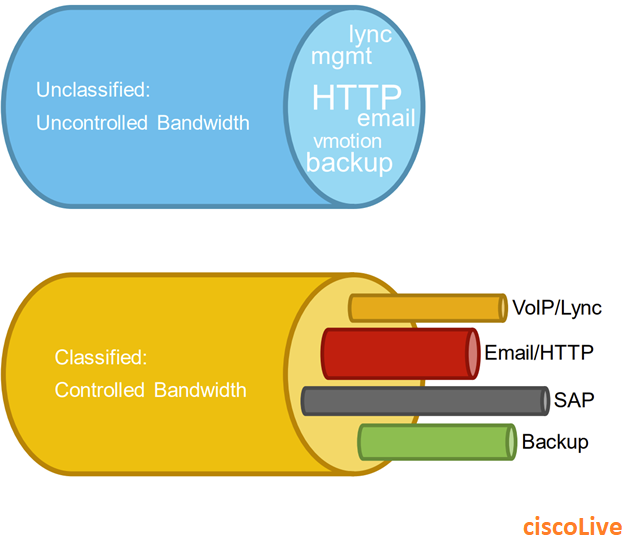

Classification

Network traffic is classified into different categories based on its type, source, and destination. For example, video and voice traffic may be considered higher priority than email and file transfers.

Marking

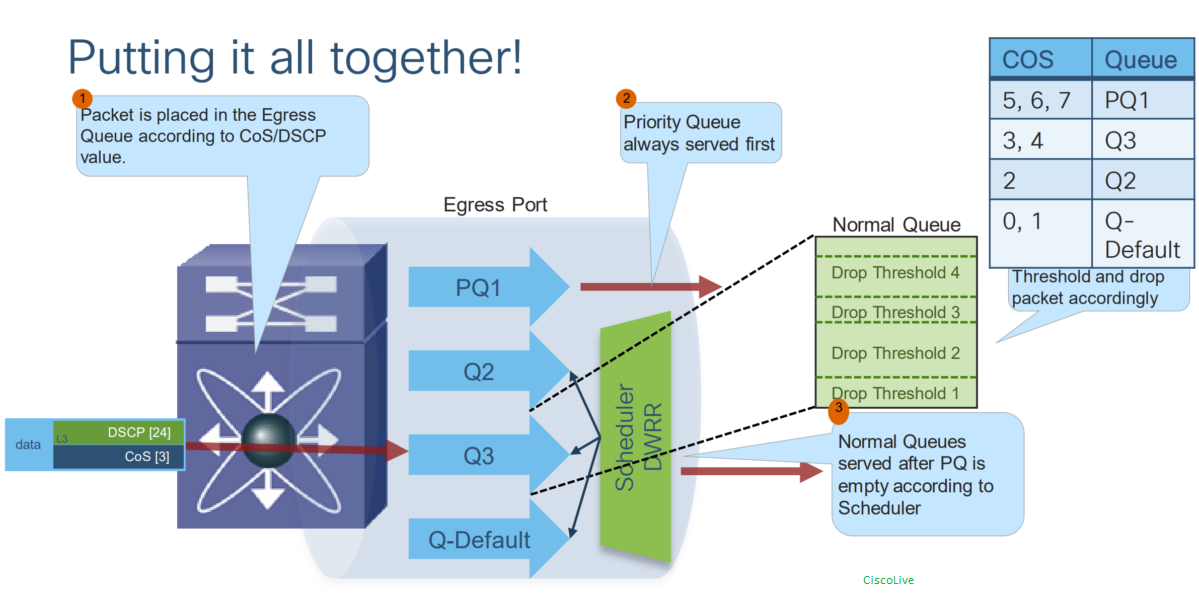

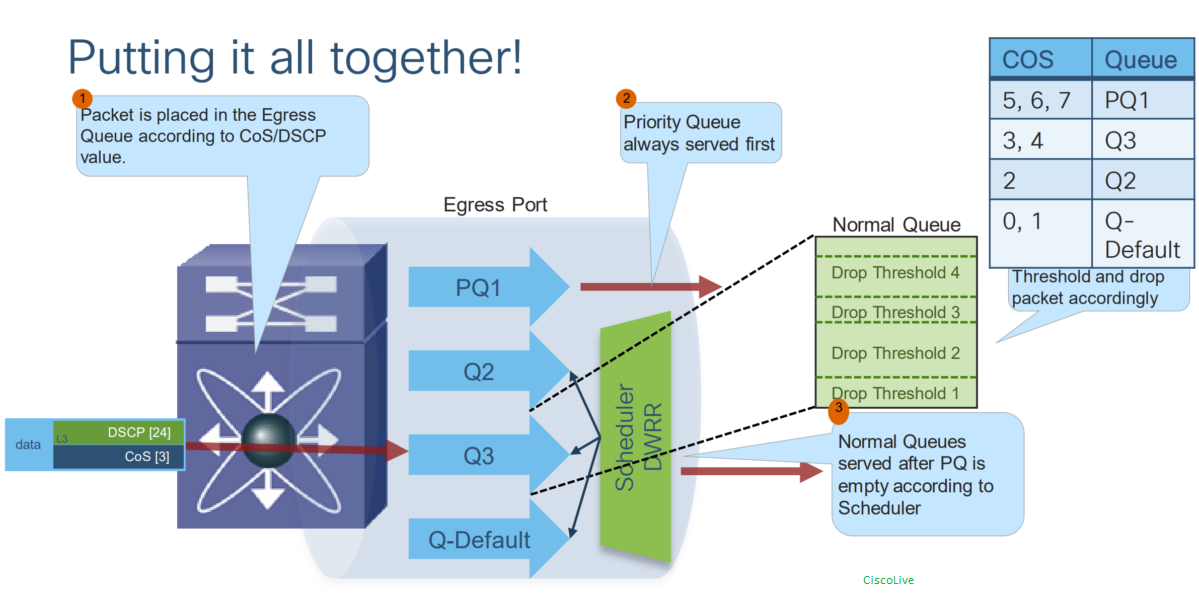

Once traffic is classified, it is marked with a priority level to ensure it is handled appropriately. This can be done using different mechanisms such as Differentiated Services Code Point (DSCP) or Class of Service (CoS) values.

Queuing:

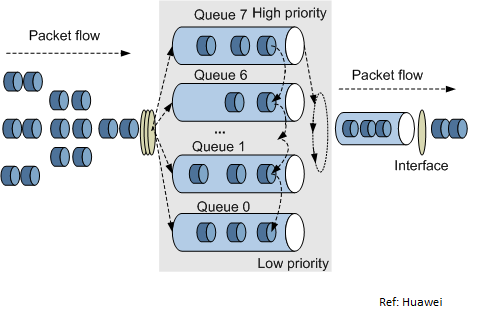

Once traffic is marked, it is placed in different queues based on its priority level. Queuing techniques can vary based on the network device and the queuing algorithm used.

Traffic queuing is the ordering of packet flows, and it can be applied to both inbound and outbound traffic.

Scheduling

Traffic is then scheduled for transmission based on its priority level and the network conditions.

Congestion Avoidance

Congestion avoidance is used to prevent network congestion by proactively dropping packets before congestion occurs. This is done by monitoring the network load and selectively dropping packets so that senders reduce their transmission rate before the network becomes overloaded.

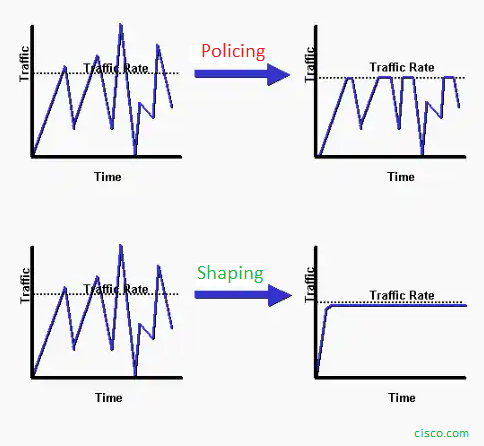

Policing and Shaping

QoS policing involves monitoring the rate of traffic flow and dropping packets that exceed a certain rate. Policing can be applied to specific traffic types or to all traffic on a network.

QoS shaping involves controlling the rate of traffic flow by delaying or buffering excess traffic. This is done by queuing packets and releasing them at a controlled rate to prevent network congestion. Shaping can be used to smooth out bursts of traffic and to ensure that network bandwidth is used efficiently.

I- Classification and Marking

Traffic entering the network can be inspected and classified according to many different parameters of the incoming packets, such as source address, destination address, or traffic type. The classifier then assigns each packet to a specific traffic class.

Note: Traffic classifiers may honor any DiffServ markings in received packets or may elect to ignore or override those markings.
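The classification step described above can be sketched as an ordered list of match rules where the first match wins. This is only an illustration: the `Packet` fields, the `VOICE_NET` prefix, the port numbers, and the class names are all assumptions made for the example, not values taken from any particular device.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    protocol: str  # "udp" or "tcp"
    dst_port: int
    dscp: int = 0  # marking already present when the packet arrives


# Hypothetical address range used by IP phones in this example.
VOICE_NET = ip_network("10.10.0.0/16")


def classify(pkt: Packet, trust_markings: bool = False) -> str:
    """Return a traffic class name; rules are checked in order."""
    # Honor an existing EF marking only if the ingress port is trusted.
    if trust_markings and pkt.dscp == 46:
        return "voice"
    if pkt.protocol == "udp" and ip_address(pkt.src) in VOICE_NET:
        return "voice"
    if pkt.protocol == "tcp" and pkt.dst_port in (80, 443):
        return "web"
    return "best-effort"
```

With `trust_markings=False`, a packet that arrives already marked EF from an unknown source falls through to `best-effort`, which corresponds to a classifier that ignores received markings.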

QoS Marking: CoS vs DSCP:

- Layer 2 QoS (CoS: Class of Service):

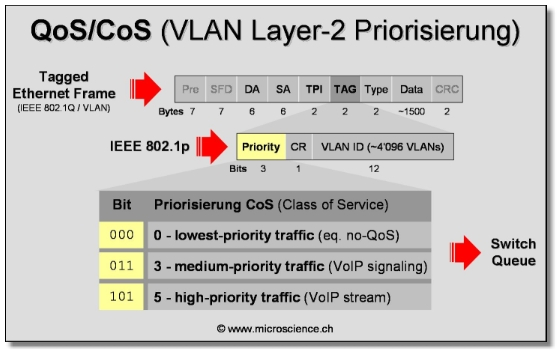

Layer 2 Ethernet switches rely on the 802.1p standard to provide QoS. 802.1p is part of IEEE 802.1Q, which defines the architecture of virtual bridged LANs (VLANs). This architecture inserts a tag into each Ethernet frame after the source address field. Within that tag, the Tag Control Information field carries a 3-bit priority value, which 802.1p uses to differentiate between up to eight classes of service.
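To make the tag layout concrete, here is a minimal sketch that reads the priority bits from a raw Ethernet frame. It assumes a single 802.1Q tag with TPID 0x8100 immediately after the two 6-byte MAC addresses; the function name `parse_vlan_tag` is hypothetical.

```python
import struct


def parse_vlan_tag(frame: bytes):
    """Return (priority, dei, vlan_id) for an 802.1Q-tagged frame, else None."""
    if len(frame) < 16:
        return None
    # Bytes 12..15: 16-bit TPID followed by the 16-bit Tag Control Information.
    tpid, tci = struct.unpack("!HH", frame[12:16])
    if tpid != 0x8100:
        return None  # untagged frame (or a tag type this sketch does not handle)
    priority = tci >> 13          # 3-bit priority (the CoS value)
    dei = (tci >> 12) & 0x1       # 1-bit drop eligible indicator
    vlan_id = tci & 0x0FFF        # 12-bit VLAN identifier
    return priority, dei, vlan_id


# A frame with zeroed MAC addresses, CoS 5, VLAN 100, EtherType IPv4.
example = bytes(12) + struct.pack("!HH", 0x8100, (5 << 13) | 100) + b"\x08\x00"
print(parse_vlan_tag(example))  # (5, 0, 100)
```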

QoS provisioning in Layer 2 is very important to networks that are primarily based on Layer 2 infrastructure as it is the only way to provide QoS on the network. Furthermore, networks based on both Layer 2 and Layer 3 network devices could benefit from a more integrated approach in end-to-end QoS provisioning that includes both Layer 2 and Layer 3.

- Layer 3 QoS (DSCP):

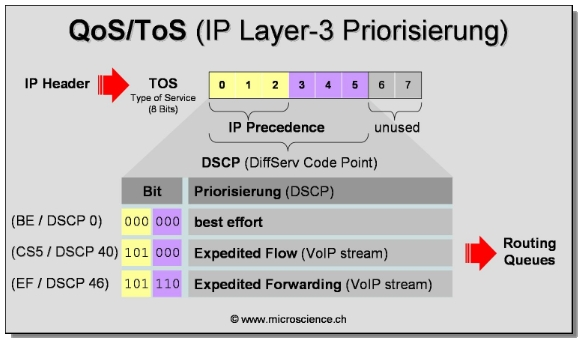

The per-hop behavior is determined by the DS field in the IP header. The DS field contains the 6-bit DSCP value.

Use of IP Precedence allows you to specify the class of service (CoS) for a packet. You use the three precedence bits in the type of service (ToS) field of the IP version 4 (IPv4) header for this purpose.

The three precedence bits give eight values (0 to 7). Values 6 and 7 are reserved for network control traffic, so you can define up to six classes of service for user traffic. Other features configured throughout the network can then use these bits to determine how to treat the packet. These other QoS features can assign appropriate traffic-handling policies, including congestion management strategy and bandwidth allocation.

For example, although IP Precedence is not a queueing method, queueing methods such as weighted fair queueing (WFQ) and Weighted Random Early Detection (WRED) can use the IP Precedence setting of the packet to prioritize traffic. By setting precedence levels on incoming traffic and using them in combination with the Cisco IOS QoS queueing features, you can create differentiated service.

Explicit Congestion Notification (ECN) occupies the least-significant 2 bits of the IPv4 TOS field and IPv6 traffic class (TC) field.

The DSCP value is encoded in 6 bits, so in theory a network could have up to 2^6 = 64 different traffic classes using the 64 available DSCP values. The DiffServ RFCs recommend, but do not require, certain encodings (called per-hop behaviors). This gives a network operator great flexibility in defining traffic classes.
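The split between the 6 DSCP bits and the 2 ECN bits can be shown with a few bit operations. This is a small sketch; the function name `split_ds_byte` is an assumption, and the ECN names follow the usual ECN code point naming.

```python
# Names of the four ECN code points (the two least-significant bits).
ECN_NAMES = {0b00: "Not-ECT", 0b01: "ECT(1)", 0b10: "ECT(0)", 0b11: "CE"}


def split_ds_byte(value: int):
    """Split the IPv4 ToS / IPv6 Traffic Class byte into (dscp, ecn)."""
    if not 0 <= value <= 0xFF:
        raise ValueError("expected a single byte (0..255)")
    dscp = value >> 2    # upper six bits
    ecn = value & 0b11   # lower two bits
    return dscp, ecn


dscp, ecn = split_ds_byte(0xB8)
print(dscp, ECN_NAMES[ecn])  # 46 Not-ECT
```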

Per-Hop Behaviors (PHB):

The following are the common per-hop behaviors (PHB):

- Default Forwarding (DF) PHB: typically used for best-effort traffic

- Expedited Forwarding (EF) PHB: dedicated to low-loss, low-latency traffic

- Assured Forwarding (AF) PHB: gives assurance of delivery under prescribed conditions

- Class Selector (CS) PHBs: maintain backward compatibility with the IP precedence field

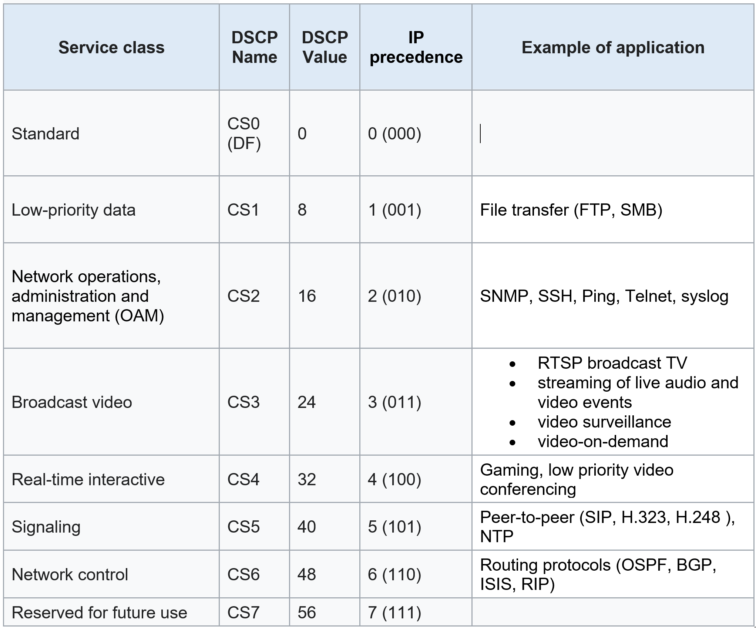

* Class Selector:

Prior to DiffServ, IPv4 networks could use the IP precedence field in the TOS byte of the IPv4 header to mark priority traffic. The TOS octet and IP precedence were not widely used. The IETF agreed to reuse the TOS octet as the DS field for DiffServ networks. In order to maintain backward compatibility with network devices that still use the Precedence field, DiffServ defines the Class Selector PHB.

The Class Selector code points are of the binary form ‘xxx000’. The first three bits are the IP precedence bits. Each IP precedence value can be mapped into a DiffServ class. IP precedence 0 maps to CS0, IP precedence 1 to CS1, and so on. If a packet is received from a non-DiffServ-aware router that used IP precedence markings, the DiffServ router can still understand the encoding as a Class Selector code point.
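Because the Class Selector code points have the form 'xxx000', the mapping between IP precedence and DSCP is a 3-bit shift. A minimal sketch, with hypothetical function names:

```python
def class_selector_dscp(precedence: int) -> int:
    """Return the DSCP value of CSn for IP precedence n (0..7)."""
    if not 0 <= precedence <= 7:
        raise ValueError("IP precedence must be in the range 0..7")
    return precedence << 3  # binary xxx000


def precedence_from_dscp(dscp: int) -> int:
    """Return the IP precedence a legacy device would read from a DSCP value."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0..63")
    return dscp >> 3  # the three most-significant bits


for n in range(8):
    print(f"CS{n} = DSCP {class_selector_dscp(n)}")
```

The second function also shows why the scheme is backward compatible: a device that only understands IP precedence reads EF (DSCP 46, binary 101110) as precedence 5.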

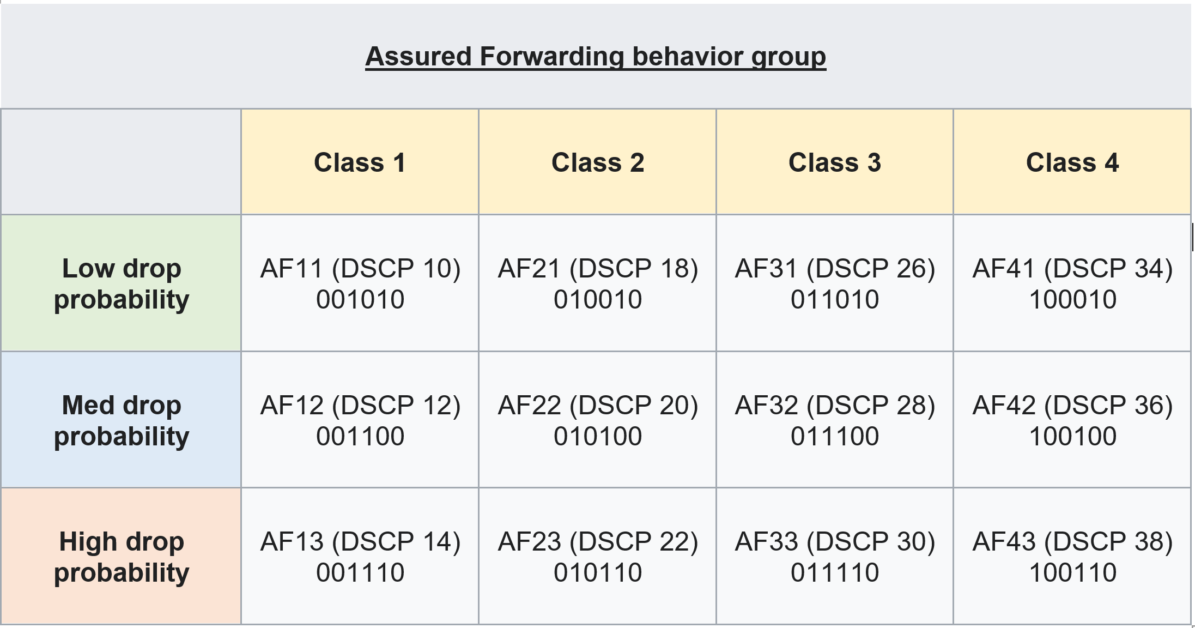

* Assured Forwarding (AF)

The AF behavior group defines four separate AF classes, with all traffic within one class having the same priority. Within each class, packets are given a drop precedence (low, medium, or high, where a higher drop precedence means the packet is dropped sooner under congestion). The combination of four classes and three drop precedences yields twelve separate DSCP encodings, from AF11 through AF43.

Should congestion occur between classes, the traffic in the higher class is given priority. If congestion occurs within a class, the packets with the higher drop precedence are discarded first.
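The twelve AF code points follow a regular pattern: for AFxy, where x is the class (1 to 4) and y is the drop precedence (1 to 3), the DSCP value is 8x + 2y. The short sketch below generates the full set; the name `af_dscp` is an assumption for the example.

```python
def af_dscp(af_class: int, drop_precedence: int) -> int:
    """Return the DSCP value for AF<class><drop_precedence>."""
    if af_class not in (1, 2, 3, 4):
        raise ValueError("AF class must be 1..4")
    if drop_precedence not in (1, 2, 3):
        raise ValueError("drop precedence must be 1..3")
    # Class occupies the top three bits, drop precedence the next two.
    return (af_class << 3) | (drop_precedence << 1)


AF_TABLE = {
    f"AF{c}{d}": af_dscp(c, d)
    for c in (1, 2, 3, 4)
    for d in (1, 2, 3)
}

for name, value in AF_TABLE.items():
    print(f"{name}: DSCP {value} ({value:06b})")
```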

II- Queuing

- Once traffic is marked, it is placed in different queues based on its priority level. Queuing techniques can vary based on the network device and the queuing algorithm used.

- QoS queuing involves dividing network traffic into different queues and applying specific policies to each queue to ensure that each application or user receives the appropriate level of service.

- Queuing algorithms determine how packets are placed in different queues and how they are serviced from those queues, based on different parameters such as priority, class, or weight.

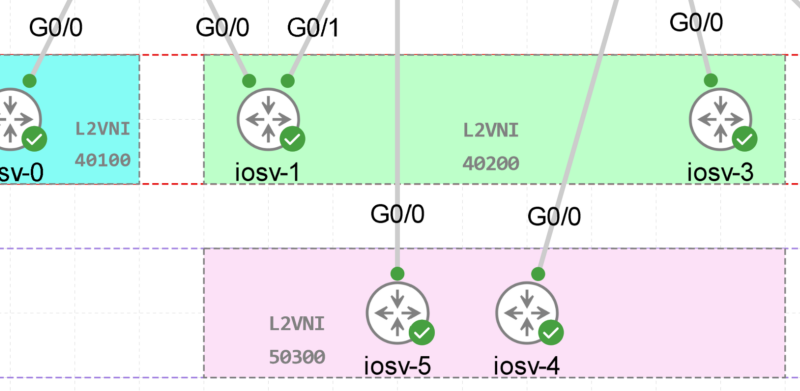

Where Queues are applied:

- A queue is used to store traffic until it can be processed or serialized. Both switch and router interfaces have ingress queues and egress queues.

- An ingress queue stores packets until the switch or router can forward the data to the appropriate interface. An egress queue stores packets until the switch or router can serialize the data onto the physical wire.

The following are the most common QoS queuing algorithms:

- Class-Based Weighted Fair Queuing (CBWFQ): This queuing algorithm classifies traffic into different queues based on pre-defined class maps and assigns weights to each queue to control the bandwidth allocation.

- Low Latency Queuing (LLQ): This algorithm is an extension of CBWFQ and assigns strict priority to delay-sensitive traffic like voice and video, ensuring low latency and jitter for these applications.

- Weighted Round Robin (WRR): The WRR algorithm assigns a weight to each queue and serves the queues in round-robin fashion, where the weight of a queue determines how many packets it may send before the next queue is served.
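The round-robin idea behind WRR can be sketched in a few lines. This is a simplified, packet-count-based illustration, not how any particular device implements it; the function name and the sample queues are assumptions.

```python
from collections import deque


def weighted_round_robin(queues, weights):
    """Yield packets; in each round, queue i may send up to weights[i] packets."""
    if any(w <= 0 for w in weights):
        raise ValueError("weights must be positive")
    while any(queues):  # an empty deque is falsy
        for queue, weight in zip(queues, weights):
            for _ in range(weight):
                if not queue:
                    break
                yield queue.popleft()


voice = deque(["v1", "v2", "v3"])
data = deque(["d1", "d2", "d3"])
order = list(weighted_round_robin([voice, data], weights=[2, 1]))
print(order)  # ['v1', 'v2', 'd1', 'v3', 'd2', 'd3']
```

Note that counting packets rather than bytes favors queues that carry large packets, which is one reason byte-aware variants exist.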

III- QoS Scheduling

- Scheduling algorithms determine the order in which packets are transmitted from a particular queue. Scheduling algorithms decide which packets are transmitted first and which packets have to wait for their turn based on the queue management policies.

Different scheduling algorithms can be used, as examples:

- Round Robin

- Deficit Round Robin

- Deficit Weighted Round Robin
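As an illustration of how a deficit-based scheduler accounts for packet sizes, here is a minimal sketch of Deficit Round Robin. Each queue earns a quantum of bytes per round and may send packets only while its accumulated deficit covers the packet at the head of the queue. Giving queues different quanta is one simple way to make the scheme weighted. The function name and sample values are assumptions.

```python
from collections import deque


def deficit_round_robin(queues, quanta):
    """Yield (queue_index, packet_size) in transmission order.

    queues: list of deques holding packet sizes in bytes.
    quanta: bytes credited to each queue per round (all must be positive).
    """
    if any(q <= 0 for q in quanta):
        raise ValueError("quanta must be positive")
    deficits = [0] * len(queues)
    while any(queues):
        for i, queue in enumerate(queues):
            if not queue:
                deficits[i] = 0  # idle queues do not accumulate credit
                continue
            deficits[i] += quanta[i]
            while queue and queue[0] <= deficits[i]:
                size = queue.popleft()
                deficits[i] -= size
                yield i, size
            if not queue:
                deficits[i] = 0


queues = [deque([1500, 1500]), deque([500, 500, 500])]
schedule = list(deficit_round_robin(queues, quanta=[1000, 1000]))
print(schedule)
# [(1, 500), (1, 500), (0, 1500), (1, 500), (0, 1500)]
```

In the first round queue 0 cannot send its 1500-byte packet with only 1000 bytes of credit, so the credit carries over to the next round. Over time both queues receive roughly the same number of bytes, regardless of packet size.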

IV- Congestion Avoidance

Congestion avoidance monitors the network traffic loads in order to be proactive and avoid congestion before it becomes a problem.

According to Cisco, these techniques are designed to provide preferential treatment for high-priority class traffic under congestion situations while concurrently maximizing network throughput and capacity utilization and minimizing packet loss and delay.

The most common congestion avoidance techniques are:

- WRED: WRED uses a weighted approach to determine which packets to drop. The weights are defined based on the packet’s size and priority.

Packets with the lowest priority are the most likely to be dropped, while packets with higher priority are dropped later, only as congestion grows. This helps the network protect important traffic when queues start to fill.

- DWRED: DWRED is an extension of the Weighted Random Early Detection (WRED) mechanism that dynamically adjusts the drop probability based on the amount of traffic in the network.

In DWRED, the drop probability for each packet is calculated dynamically based on the average queue length of the network. The drop probability is higher when the average queue length is high, and lower when the average queue length is low.
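The relationship between average queue length and drop probability can be sketched with the classic RED-style linear ramp, applied per traffic class. This is a simplified model under stated assumptions: the `DropProfile` fields, the threshold values, and the averaging weight are illustrative and not taken from any vendor default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DropProfile:
    min_threshold: float    # below this average depth, nothing is dropped
    max_threshold: float    # at or above this average depth, everything is dropped
    max_probability: float  # drop probability just below max_threshold


def update_average(average: float, current_depth: float, weight: float = 0.002) -> float:
    """Exponentially weighted moving average of the queue depth."""
    return (1 - weight) * average + weight * current_depth


def drop_probability(average: float, profile: DropProfile) -> float:
    """Drop probability for a packet of the class that uses this profile."""
    if average < profile.min_threshold:
        return 0.0
    if average >= profile.max_threshold:
        return 1.0
    span = profile.max_threshold - profile.min_threshold
    return profile.max_probability * (average - profile.min_threshold) / span


# Hypothetical profiles: low-priority traffic starts dropping earlier.
LOW_PRIORITY = DropProfile(min_threshold=20, max_threshold=40, max_probability=0.10)
HIGH_PRIORITY = DropProfile(min_threshold=35, max_threshold=40, max_probability=0.10)

print(drop_probability(30, LOW_PRIORITY))   # about 0.05
print(drop_probability(30, HIGH_PRIORITY))  # 0.0
```

At an average depth of 30 packets, the low-priority class is already being dropped with about 5% probability while the high-priority class is untouched, which is the weighted behavior described above.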

V- QoS Traffic Policing and Shaping

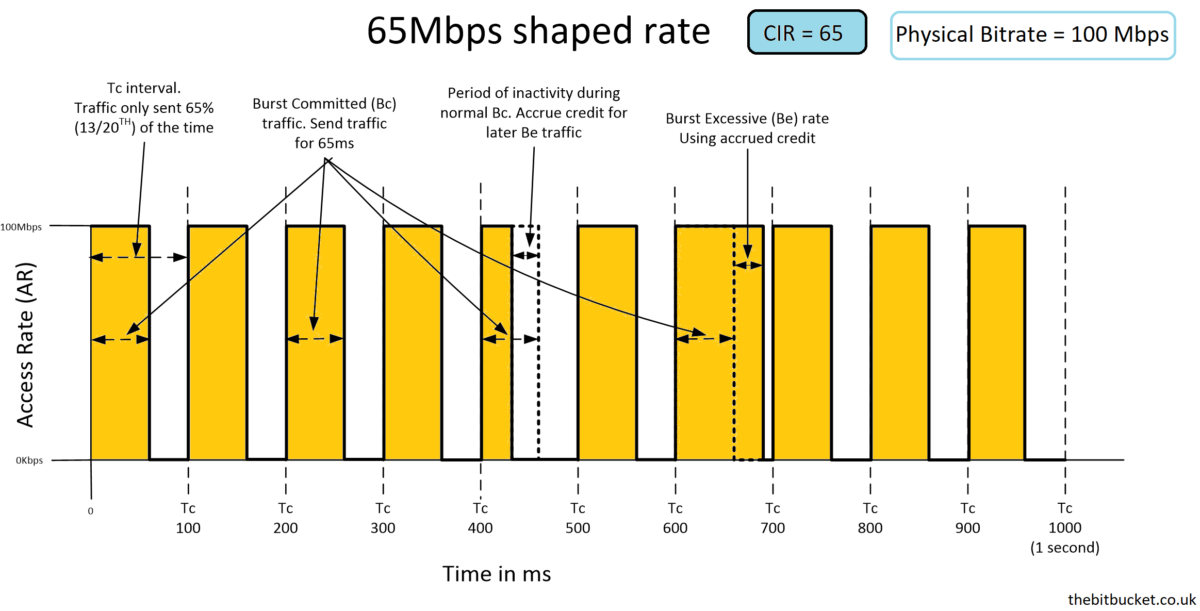

Shaping:

- Traffic shaping is used to guarantee performance, improve latency, or increase usable bandwidth for the prioritized packets by delaying other packets.

- Traffic shaping retains excess packets in a queue and then schedules the excess for later transmission over increments of time. The result of traffic shaping is a smoothed packet output rate.

- Shaping implies the existence of a queue and of sufficient memory to buffer delayed packets, while policing does not. Queues are an outbound concept; packets that leave an interface get queued and can be shaped.

- CIR (committed information rate) is the bitrate that is defined in the shaping policy (according to the target bandwidth)

- Tc (time interval) is the time interval in ms over which we can send the Bc (committed burst).

In our case, Tc equals 100 ms.

- Bc (committed burst) is the amount of traffic that we can send during each Tc (time interval) according to the defined CIR, and it is measured in bits.

Let’s do some math for it:

In our case, CIR = 65 Mbps and Tc = 100 ms.

Number of Tc intervals in 1 second = 1000 ms / 100 ms = 10.

So Bc = 65,000,000 bits / 10 = 6,500,000 bits (6.5 Mb) per interval.

- In summary: number of Tc intervals per second x Bc = CIR, that is, 10 x 6.5 Mb = 65 Mb per second (65 Mbps).
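The same arithmetic, together with the smoothing effect of a shaper, can be sketched as follows. The model is deliberately simple: time is divided into Tc intervals, at most Bc bits leave per interval, and any excess waits in a queue. The function names and the sample arrival pattern are assumptions.

```python
def committed_burst_bits(cir_bps: int, tc_ms: int) -> int:
    """Bc = CIR x Tc, with CIR in bits per second and Tc in milliseconds."""
    return cir_bps * tc_ms // 1000


def shape(arrivals_bits, bc_bits):
    """Return (bits sent in each interval, bits still queued at the end)."""
    backlog = 0
    sent = []
    for arriving in arrivals_bits:
        backlog += arriving              # excess traffic is buffered, not dropped
        released = min(backlog, bc_bits) # at most Bc bits leave per Tc
        sent.append(released)
        backlog -= released
    return sent, backlog


bc = committed_burst_bits(cir_bps=65_000_000, tc_ms=100)
print(bc)  # 6500000

# A 10 Mb burst in the first interval is spread over two intervals.
sent, queued = shape([10_000_000, 0, 3_000_000], bc)
print(sent, queued)  # [6500000, 3500000, 3000000] 0
```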

Policing:

Policing can be used to control the amount of traffic that enters a network or a specific part of a network, and to drop or mark packets that do not conform to the specified QoS parameters.

In QoS policing, traffic is compared to a set of predefined rules or policies, and packets that do not comply with these rules are either discarded or marked with a lower priority. This helps to ensure that the traffic flow is regulated and that the network resources are used efficiently.
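A common way to model a policer is a token bucket: tokens accumulate at the committed rate up to a burst size, and a packet conforms only if enough tokens are available when it arrives. The sketch below is a single-rate, two-outcome illustration; the class name, parameters, and the "conform"/"exceed" labels are assumptions, and a real policer would then drop or re-mark the exceeding packets according to policy.

```python
class TokenBucketPolicer:
    """Single-rate policer: no queue, each packet is judged on arrival."""

    def __init__(self, cir_bps: float, burst_bits: float):
        self.rate = cir_bps
        self.capacity = burst_bits
        self.tokens = float(burst_bits)  # the bucket starts full
        self.last_time = 0.0

    def check(self, now: float, size_bits: int) -> str:
        """Return "conform" or "exceed" for a packet arriving at time `now` (seconds)."""
        elapsed = now - self.last_time
        self.last_time = now
        # Refill at the committed rate, never beyond the burst size.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if size_bits <= self.tokens:
            self.tokens -= size_bits
            return "conform"
        return "exceed"  # candidate for dropping or marking down


policer = TokenBucketPolicer(cir_bps=1_000_000, burst_bits=12_000)
results = [
    policer.check(0.000, 12_000),  # uses the whole initial burst
    policer.check(0.001, 12_000),  # only about 1,000 tokens have been refilled
    policer.check(0.013, 12_000),  # the bucket has refilled to its capacity
]
print(results)  # ['conform', 'exceed', 'conform']
```

Unlike the shaper, nothing is buffered here: a packet that exceeds the rate is acted on immediately rather than delayed.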

References:

- routeralley.com

- en.wikipedia.org/wiki/Differentiated_services

- Cisco.com

- intechopen.com/chapters/9717
