VxLAN Explained


What is VxLAN:

VXLAN provides the same Ethernet Layer 2 network services as VLAN does today, but with greater extensibility and flexibility. It is a Layer 2 overlay scheme over a Layer 3 network. It uses MAC Address-in-User Datagram Protocol (MAC-in-UDP) encapsulation to provide a means to extend Layer 2 segments across the core network. VXLAN is a solution to support a flexible, large-scale multitenant environment over a shared common physical infrastructure. The transport protocol over the core network is IP plus UDP. Compared to VLAN, VXLAN offers the following benefits:

- Flexible placement of multitenant segments throughout the data center: It provides a solution to extend Layer 2 segments over the underlying shared network infrastructure so that tenant workload can be placed across physical pods in the data center.

- Higher scalability to address more Layer 2 segments: VLANs use a 12-bit VLAN ID to address Layer 2 segments, which limits scalability to 4094 usable VLANs. VXLAN uses a 24-bit segment ID known as the VXLAN network identifier (VNID), which enables up to 16 million VXLAN segments to coexist in the same administrative domain.

- Better utilization of available network paths in the underlying infrastructure: VLAN relies on the Spanning Tree Protocol for loop prevention, which blocks redundant paths and can leave up to half of the network links unused. In contrast, VXLAN packets are transferred through the underlying network based on their Layer 3 header and can take full advantage of Layer 3 routing, equal-cost multipath (ECMP) routing, and link aggregation protocols to use all available paths.
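The scalability comparison comes down to field widths. The short sketch below just does the arithmetic; it assumes IEEE 802.1Q's two reserved VLAN IDs (0 and 4095) and counts the raw 24-bit VNI space without subtracting any implementation-specific reserved values.

```python
# Arithmetic behind the scalability claim: 12-bit VLAN ID vs 24-bit VNI.
VLAN_ID_BITS = 12
VNI_BITS = 24


def usable_vlan_ids() -> int:
    # 2**12 = 4096 values; IDs 0 and 4095 are reserved by IEEE 802.1Q.
    return 2 ** VLAN_ID_BITS - 2


def vni_values() -> int:
    # Raw size of the 24-bit VNI field (no reserved values subtracted here).
    return 2 ** VNI_BITS


print(usable_vlan_ids())                  # 4094
print(vni_values())                       # 16777216
print(vni_values() // 2 ** VLAN_ID_BITS)  # 4096 times the VLAN ID space
```

The "16 million" figure in the text is 2^24 = 16,777,216 rounded down.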

How VXLAN Works:

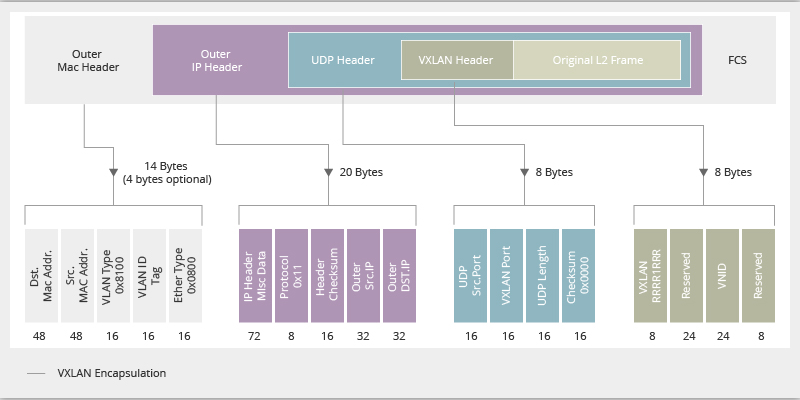

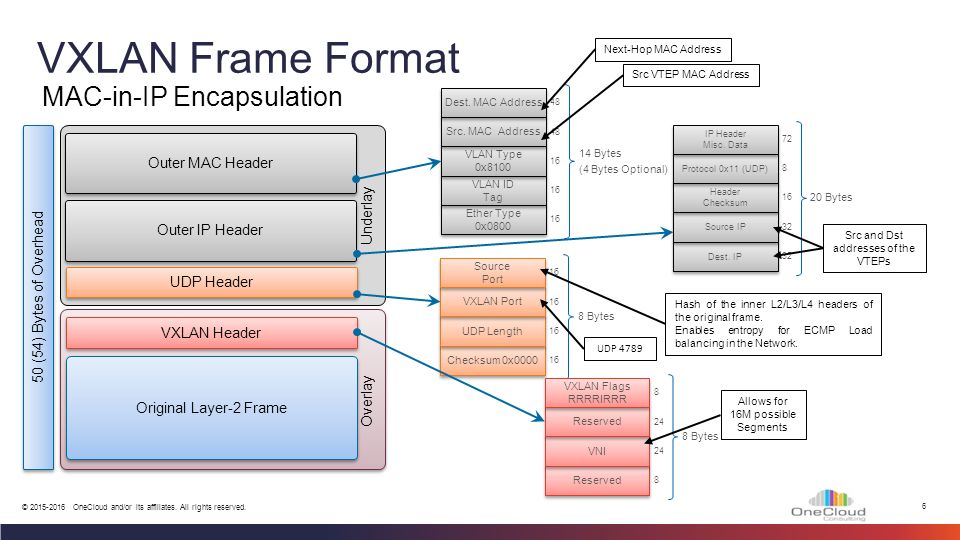

VXLAN defines a MAC-in-UDP encapsulation scheme in which the original Layer 2 frame has a VXLAN header added and is then placed in a UDP-IP packet. With this MAC-in-UDP encapsulation, VXLAN tunnels Layer 2 networks over a Layer 3 network. The VXLAN packet format is shown in the following figure.

As shown in the above figure, VXLAN introduces an 8-byte VXLAN header that consists of 8 flag bits, a 24-bit VNID, and 32 reserved bits. The VXLAN header together with the original Ethernet frame goes in the UDP payload. The 24-bit VNID is used to identify Layer 2 segments and to maintain Layer 2 isolation between the segments. With all 24 bits in the VNID, VXLAN can support approximately 16 million LAN segments.
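To make the 8-byte header concrete, here is a minimal sketch that packs and parses it following the RFC 7348 layout (flags byte with the "I" bit 0x08 set, 24 reserved bits, 24-bit VNI, 8 reserved bits). The helper names are hypothetical, and only the VXLAN header is built; the outer Ethernet, IP, and UDP headers are left out.

```python
import struct

VXLAN_UDP_PORT = 4789  # IANA-assigned VXLAN destination port (RFC 7348)
FLAG_I = 0x08          # "I" flag: set when the VNI field is valid


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: 8 flag bits followed by 24 reserved bits.
    # Word 2: 24-bit VNI followed by 8 reserved bits.
    return struct.pack("!II", FLAG_I << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN UDP payload."""
    word1, word2 = struct.unpack("!II", header[:8])
    if not (word1 >> 24) & FLAG_I:
        raise ValueError("I flag not set; VNI is not valid")
    return word2 >> 8


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """VXLAN header + original Ethernet frame = the UDP payload."""
    return build_vxlan_header(vni) + inner_frame


print(build_vxlan_header(5000).hex())  # 0800000000138800
```

The receiving VTEP reverses the process: it reads the VNI to pick the Layer 2 segment and strips the first 8 bytes to recover the original frame.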

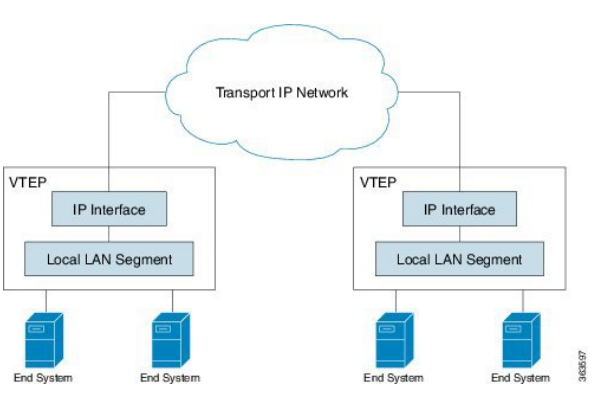

VXLAN Tunnel Endpoint

VXLAN uses VXLAN tunnel endpoint (VTEP) devices to map tenants' end devices to VXLAN segments and to perform VXLAN encapsulation and decapsulation. Each VTEP function has two interfaces: one is a switch interface on the local LAN segment to support local endpoint communication through bridging, and the other is an IP interface to the transport IP network.

The IP interface has a unique IP address that identifies the VTEP device on the transport IP network, known as the infrastructure VLAN. The VTEP device uses this IP address to encapsulate Ethernet frames and transmits the encapsulated packets to the transport network through the IP interface. A VTEP device also discovers the remote VTEPs for its VXLAN segments and learns remote MAC address-to-VTEP mappings through its IP interface. The functional components of VTEPs and the logical topology that is created for Layer 2 connectivity across the transport IP network are shown in the following figure.
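The MAC address-to-VTEP mapping a VTEP learns can be pictured as a small table keyed by segment and MAC. The sketch below is a hypothetical model of flood-and-learn behavior, not any vendor's implementation: on decapsulation the inner source MAC is bound to the outer source IP, and a failed lookup means the frame has to be handled as unknown unicast (flooded).

```python
from typing import Dict, Optional, Tuple


class VtepMacTable:
    """Hypothetical per-VTEP table: (VNI, inner MAC) -> remote VTEP IP."""

    def __init__(self) -> None:
        self._table: Dict[Tuple[int, str], str] = {}

    def learn(self, vni: int, inner_src_mac: str, outer_src_ip: str) -> None:
        # After decapsulation, the inner source MAC is reachable through
        # the VTEP that sent the packet (the outer source IP address).
        self._table[(vni, inner_src_mac.lower())] = outer_src_ip

    def lookup(self, vni: int, inner_dst_mac: str) -> Optional[str]:
        # None means "unknown unicast": the frame must be flooded as BUM traffic.
        return self._table.get((vni, inner_dst_mac.lower()))


table = VtepMacTable()
table.learn(5000, "AA:BB:CC:00:00:01", "10.0.0.2")
print(table.lookup(5000, "aa:bb:cc:00:00:01"))  # 10.0.0.2
print(table.lookup(6000, "aa:bb:cc:00:00:01"))  # None (different segment)
```

Keying on the VNI as well as the MAC is what keeps segments isolated: the same MAC in two VNIs yields two independent entries.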

The VXLAN segments are independent of the underlying network topology; conversely, the underlying IP network between VTEPs is independent of the VXLAN overlay. The underlay routes the encapsulated packets based on the outer IP header, which has the initiating VTEP as the source IP address and the terminating VTEP as the destination IP address.
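The outer headers are all the underlay needs to route a VXLAN packet. The sketch below builds them as plain fields; the outer UDP source port is derived from a hash of the inner frame, which RFC 7348 recommends so that ECMP can spread different flows across paths while keeping one flow on one path. The choice of CRC32 over the first 14 bytes (inner Ethernet header) is an assumption for illustration; real devices typically hash more inner header fields.

```python
import zlib
from dataclasses import dataclass

VXLAN_UDP_PORT = 4789


@dataclass
class OuterHeaders:
    src_ip: str    # initiating (local) VTEP
    dst_ip: str    # terminating (remote) VTEP
    src_port: int  # derived from the inner frame for ECMP entropy
    dst_port: int = VXLAN_UDP_PORT


def outer_headers(local_vtep: str, remote_vtep: str, inner_frame: bytes) -> OuterHeaders:
    # Assumption: hash only the inner Ethernet header (dst MAC, src MAC, EtherType).
    flow_hash = zlib.crc32(inner_frame[:14])
    # Map the hash into the dynamic port range 49152-65535.
    src_port = 49152 + flow_hash % (65536 - 49152)
    return OuterHeaders(local_vtep, remote_vtep, src_port)


h = outer_headers("10.0.0.1", "10.0.0.2", bytes(14))
print(h.src_ip, h.dst_ip, h.dst_port)  # 10.0.0.1 10.0.0.2 4789
```

Because the hash is deterministic, every packet of the same inner flow gets the same outer source port and therefore follows the same underlay path.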

VXLAN Packet Forwarding

VXLAN Control Plane Options

VXLAN scales based on how well VTEP devices handle broadcast, unknown unicast, and multicast (BUM) traffic. VTEP devices use host MAC address-to-VTEP IP address mappings to forward encapsulated frames across the IP transport network. A control plane has to handle VTEP discovery and populate these mapping tables.

VXLAN forwards BUM traffic using a multicast forwarding tree or ingress replication, which can be static ingress replication or Multiprotocol Border Gateway Protocol Ethernet Virtual Private Network (MP-BGP EVPN) ingress replication.

With static ingress replication, remote peers are statically configured; with VXLAN EVPN ingress replication, the peer IP addresses are exchanged between VTEPs through the BGP EVPN control plane. In both cases, multi-destination packets are encapsulated as unicast and delivered to each of the statically configured or dynamically discovered remote peers.

Static ingress replication and MP-BGP EVPN ingress replication do not require any IP multicast routing in the underlay.
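Ingress replication itself is simple: the ingress VTEP makes one unicast copy of a BUM frame per remote peer in the frame's VNI. In the hypothetical sketch below, the peer list is a plain dictionary; with static ingress replication it would be filled from configuration, and with EVPN ingress replication from routes learned over BGP. Either way, the replication step is the same.

```python
from typing import Dict, List, Set, Tuple


def ingress_replicate(
    vni: int, frame: bytes, peers_by_vni: Dict[int, Set[str]]
) -> List[Tuple[str, int, bytes]]:
    """Return one (remote VTEP IP, VNI, frame) unicast copy per peer of the VNI."""
    # Sorted only to make the output order deterministic.
    return [(peer, vni, frame) for peer in sorted(peers_by_vni.get(vni, set()))]


# Hypothetical peer list (statically configured or learned via BGP EVPN).
peers = {5000: {"10.0.0.3", "10.0.0.2"}, 6000: {"10.0.0.2"}}
for peer, vni, _ in ingress_replicate(5000, b"\xff" * 6, peers):
    print(peer, vni)
# 10.0.0.2 5000
# 10.0.0.3 5000
```

No multicast state is needed in the underlay, at the cost of the ingress VTEP sending N copies of each BUM frame for N peers.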

1- VXLAN Static Ingress Replication

VXLAN uses flooding and dynamic MAC address learning to transport broadcast, unknown unicast, and multicast traffic. VXLAN forwards these traffic types using a multicast forwarding tree or ingress replication.

With static ingress replication:

- Remote peers are statically configured.

- Multi-destination packets are unicast encapsulated and delivered to each of the statically configured remote peers.

Note: Cisco NX-OS supports multiple remote peers in one segment and also allows the same remote peer in multiple segments.

Static Ingress Replication Configuration:

| Step | Command or Action | Purpose |
|---|---|---|
| Step 1 | configure terminal | Enters global configuration mode. |
| Step 2 | interface nve x | Creates a VXLAN overlay interface that terminates VXLAN tunnels. Note: Only one NVE interface is allowed on the switch. |
| Step 3 | member vni [vni-id \| vni-range] | Maps VXLAN VNIs to the NVE interface. |
| Step 4 | ingress-replication protocol static | Enables static ingress replication for the VNI. |
| Step 5 | peer-ip n.n.n.n | Adds a static remote peer (VTEP) IP address for the VNI. |
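The steps above nest: peers belong to a VNI, and VNIs belong to the NVE interface. The hypothetical helper below renders that hierarchy as text, using only the commands listed in the table. It is a sketch for illustration, not a complete working configuration; a real NVE interface needs additional settings (such as its source interface) that the table does not cover.

```python
from typing import Dict, List


def static_ir_config(nve_id: int, peers_by_vni: Dict[int, List[str]]) -> str:
    """Render the table's commands for each VNI and its static peers (hypothetical helper)."""
    lines = ["configure terminal", f"interface nve {nve_id}"]
    for vni, peers in sorted(peers_by_vni.items()):
        lines.append(f"  member vni {vni}")
        lines.append("    ingress-replication protocol static")
        for peer in peers:
            lines.append(f"      peer-ip {peer}")
    return "\n".join(lines)


# The same peer (10.0.0.2) appears in two segments, as the note above allows.
print(static_ir_config(1, {5000: ["10.0.0.2", "10.0.0.3"], 6000: ["10.0.0.2"]}))
```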

Check this example of VXLAN static ingress replication:

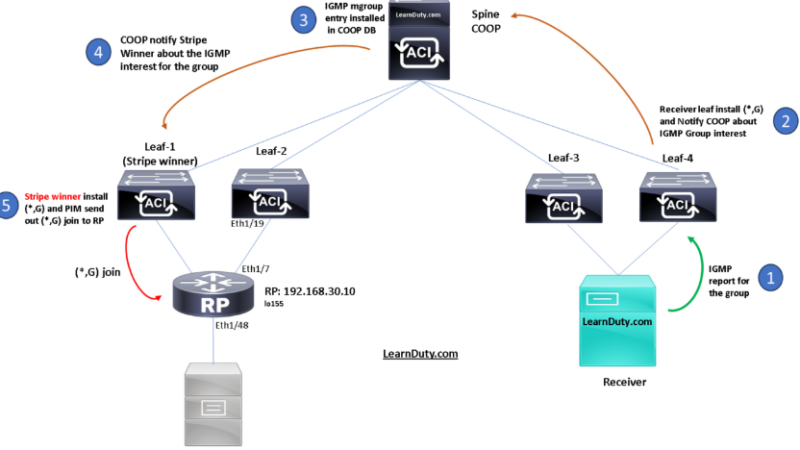

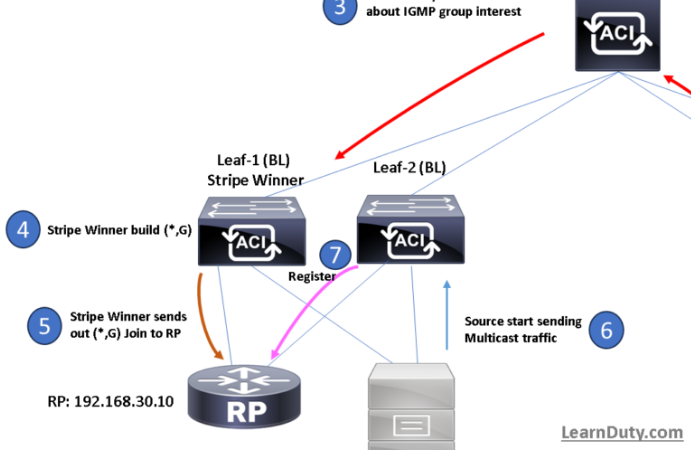

2- VXLAN Control Plane Using BIDIR-PIM:

When VXLAN uses BIDIR-PIM, VTEPs map each VNI to an IP multicast group in the transport IP network. Each VTEP device is configured independently and joins this multicast group as an IP host through the Internet Group Management Protocol (IGMP). The IGMP joins trigger PIM joins and signaling through the transport network for that multicast group. PIM builds the multicast distribution tree for this group through the transport network based on the locations of the participating VTEPs.

VXLAN uses the multicast group to transmit BUM traffic through the IP network. The use of multicast limits Layer 2 flooding to those devices that have end systems participating in the same VXLAN segment. Known unicast traffic is sent from one VTEP to another using the remote VTEP's unicast IP address.
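The effect of the VNI-to-group mapping can be modeled in a few lines. In this hypothetical sketch, group membership stands in for the IGMP/PIM state, and the group addresses are made up for illustration. BUM traffic sent to a VNI's group reaches only the VTEPs that joined that group, which is how flooding stays confined to the segment.

```python
from typing import Dict, Set

# Hypothetical VNI-to-multicast-group mapping configured on every VTEP.
vni_to_group: Dict[int, str] = {5000: "239.1.1.1", 6000: "239.1.1.2"}


class MulticastUnderlay:
    """Hypothetical model of which VTEPs have joined which group."""

    def __init__(self) -> None:
        self.members: Dict[str, Set[str]] = {}

    def join(self, group: str, vtep_ip: str) -> None:
        # Stands in for the IGMP join that triggers PIM signaling.
        self.members.setdefault(group, set()).add(vtep_ip)

    def deliver_bum(self, group: str, sender: str) -> Set[str]:
        # A BUM packet sent to the group reaches every member except the sender.
        return self.members.get(group, set()) - {sender}


underlay = MulticastUnderlay()
underlay.join(vni_to_group[5000], "10.0.0.1")
underlay.join(vni_to_group[5000], "10.0.0.2")
underlay.join(vni_to_group[6000], "10.0.0.3")
print(underlay.deliver_bum(vni_to_group[5000], "10.0.0.1"))  # {'10.0.0.2'}
```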

Check VXLAN BIDIR-PIM Configuration.

3- VXLAN BGP EVPN:

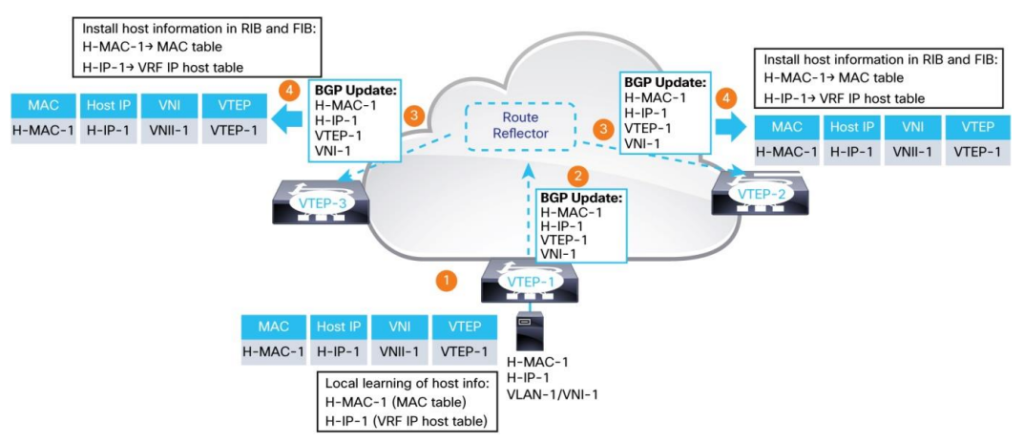

MP-BGP EVPN is a control protocol for VXLAN based on industry standards. Prior to EVPN, VXLAN overlay networks operated in the flood-and-learn mode. In this mode, end-host information learning and VTEP discovery are both data plane driven, with no control protocol to distribute end-host reachability information among VTEPs. MP-BGP EVPN changes this model. It introduces control-plane learning for end hosts behind remote VTEPs.

It provides control-plane and data-plane separation and a unified control plane for both Layer-2 and Layer-3 forwarding in a VXLAN overlay network.

A VTEP in MP-BGP EVPN learns the MAC addresses and IP addresses of locally attached end hosts through local learning. This learning can be local-data-plane based using the standard Ethernet and IP learning procedures, such as source MAC address learning from the incoming Ethernet frames and IP address learning when the hosts send Gratuitous ARP (GARP) and Reverse ARP (RARP) packets or ARP requests for the gateway IP address on the VTEP. Alternatively, the learning can be achieved by using a control plane or through management-plane integration between the VTEP and the local hosts.
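Local data-plane learning can be sketched as parsing incoming frames: the source MAC comes from every Ethernet header, and the host IP comes from the sender protocol address of an ARP packet (which covers GARP and ARP requests for the gateway). This is a hypothetical simplification: it assumes untagged Ethernet with IPv4 ARP and does not handle RARP, VLAN tags, or IPv6.

```python
import ipaddress
from typing import Dict, Optional

ETHERTYPE_ARP = 0x0806


def fmt_mac(raw: bytes) -> str:
    return ":".join(f"{b:02x}" for b in raw)


def learn_local(frame: bytes, table: Dict[str, Optional[str]]) -> None:
    """Record src MAC (and sender IP for ARP/GARP) from one untagged frame."""
    src_mac = fmt_mac(frame[6:12])
    ethertype = int.from_bytes(frame[12:14], "big")
    ip: Optional[str] = None
    # Untagged Ethernet (14 bytes) + IPv4 ARP (28 bytes) = 42 bytes minimum.
    if ethertype == ETHERTYPE_ARP and len(frame) >= 42:
        # Sender protocol address sits at offset 14 + 14 = 28 in the frame.
        ip = str(ipaddress.IPv4Address(frame[28:32]))
    # Keep a previously learned IP if this frame carries none.
    table[src_mac] = ip or table.get(src_mac)


garp = bytes.fromhex(
    "ffffffffffff" "001122334455" "0806"  # Ethernet: broadcast dst, src, ARP
    "0001" "0800" "06" "04" "0001"       # ARP: Ethernet/IPv4, request
    "001122334455" "0a000005"            # sender MAC, sender IP 10.0.0.5
    "000000000000" "0a000005"            # target MAC, target IP (GARP)
)
hosts: Dict[str, Optional[str]] = {}
learn_local(garp, hosts)
print(hosts)  # {'00:11:22:33:44:55': '10.0.0.5'}
```

Entries like these are what the VTEP then advertises into MP-BGP EVPN.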

EVPN Route Advertisement and Remote-Host Learning

After learning the local-host MAC and IP addresses, a VTEP advertises the host information in the MP-BGP EVPN control plane so that this information can be distributed to other VTEPs. This approach enables EVPN VTEPs to learn the remote end hosts in the MP-BGP EVPN control plane.

The EVPN routes are advertised through the L2VPN EVPN address-family. The BGP L2VPN EVPN routes include the following information:

● RD: Route distinguisher

● MAC address length: 48 bits (6 bytes)

● MAC address: Host MAC address

● IP address length: 32 or 128 bits

● IP address: Host IP address (IPv4 or IPv6)

● L2 VNI: VNI of the bridge domain to which the end host belongs

● L3 VNI: VNI associated with the tenant VRF routing instance
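The route contents listed above map naturally onto a small record. The sketch below is a hypothetical in-memory model of those fields, not the BGP wire encoding; the two length fields are derived from the MAC and IP values, with an IP length of 0 when only a MAC address is advertised.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EvpnMacIpRoute:
    """Hypothetical model of the route fields listed above."""
    rd: str                # route distinguisher, e.g. "10.0.0.1:32777"
    mac: str               # host MAC address
    ip: Optional[str]      # host IPv4 or IPv6 address, if known
    l2_vni: int            # VNI of the host's bridge domain
    l3_vni: Optional[int]  # VNI of the tenant VRF, if routed

    @property
    def mac_length_bits(self) -> int:
        return 48  # 6-byte MAC address

    @property
    def ip_length_bits(self) -> int:
        # 32 for IPv4, 128 for IPv6, 0 when only the MAC is advertised.
        if self.ip is None:
            return 0
        return ipaddress.ip_address(self.ip).max_prefixlen


r4 = EvpnMacIpRoute("10.0.0.1:32777", "00:11:22:33:44:55", "10.1.1.10", 5000, 50000)
r6 = EvpnMacIpRoute("10.0.0.1:32777", "00:11:22:33:44:55", "2001:db8::10", 5000, 50000)
print(r4.ip_length_bits, r6.ip_length_bits)  # 32 128
```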

MP-BGP EVPN uses the BGP extended community attribute to transmit the exported route targets in an EVPN route. When an EVPN VTEP receives an EVPN route, it compares the route-target attributes in the received route to its locally configured route-target import policy to decide whether to import or ignore the route. This approach reuses the well-established MP-BGP VPN technology (RFC 4364) and provides scalable multitenancy in which a node that does not have a VRF locally does not import the corresponding routes. VPN scaling can be further enhanced by the use of BGP constructs such as route-target-constrained route distribution (RFC 4684).

When a VTEP switch originates MP-BGP EVPN routes for its locally learned end hosts, it uses its own VTEP address as the BGP next-hop. This BGP next-hop must remain unchanged through the route distribution across the network because the remote VTEP must learn the originating VTEP address as the next-hop for VXLAN encapsulation when forwarding packets for the overlay network.
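The receive side of the last two paragraphs can be sketched together: a route is imported only if one of its route targets matches the local import policy, and the unchanged BGP next-hop is installed as the VXLAN tunnel destination for the host MAC. The class names and route-target strings below are hypothetical illustrations.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Set, Tuple


@dataclass(frozen=True)
class ReceivedRoute:
    mac: str
    l2_vni: int
    next_hop: str                  # originating VTEP, unchanged in transit
    route_targets: FrozenSet[str]  # exported RTs (extended communities)


class EvpnVtep:
    """Hypothetical receiving VTEP with a route-target import policy."""

    def __init__(self, import_rts: Set[str]) -> None:
        self.import_rts = import_rts
        self.mac_to_vtep: Dict[Tuple[int, str], str] = {}

    def receive(self, route: ReceivedRoute) -> bool:
        # Ignore the route unless at least one RT matches the import policy.
        if not (route.route_targets & self.import_rts):
            return False
        # The BGP next-hop becomes the VXLAN tunnel destination for this MAC.
        self.mac_to_vtep[(route.l2_vni, route.mac)] = route.next_hop
        return True


vtep = EvpnVtep(import_rts={"65000:5000"})
print(vtep.receive(ReceivedRoute("00:11:22:33:44:55", 5000, "10.0.0.1", frozenset({"65000:5000"}))))  # True
print(vtep.receive(ReceivedRoute("00:aa:bb:cc:dd:ee", 6000, "10.0.0.9", frozenset({"65000:6000"}))))  # False
```

If a route reflector rewrote the next-hop, the entry would point at the reflector instead of the originating VTEP, which is why the next-hop must stay unchanged.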

The underlay network provides IP reachability for all the VTEP addresses that are used to route the encapsulated VXLAN packets toward the egress VTEP through the underlay network. The network devices in the underlay network need to maintain routing information only for the VTEP addresses. They don’t need to learn the EVPN routes. This approach simplifies the underlay network operation and increases its stability and scalability.

Check BGP EVPN Configuration

Anycast Gateway:

Reference:

https://community.fs.com/fr/blog/nvgre-vs-vxlan-whats-the-difference.html

https://www.cisco.com/c/en/us/td/docs/switches/datacenter/nexus9000/sw/7-x/vxlan/configuration/guide/b_Cisco_Nexus_9000_Series_NX-OS_VXLAN_Configuration_Guide_7x/b_Cisco_Nexus_9000_Series_NX-OS_VXLAN_Configuration_Guide_7x_chapter_011.html
